In January 2026, everything in software engineering seems to be about AI. However, several aspects of this topic do not point to the same conclusion.

I’m no specialist; these are just random thoughts finally written down somewhere.

If you don’t want to read everything, you can skip to the conclusion (TL;DR).

No Profit

So far, I have not seen any company turn a profit from AI coding agents. Claude Code, Cursor, Codex, Gemini, Copilot… the list goes on, but every company is still betting and competing to see who can perform better.

Just to clarify, I’m not against using them. In my daily tasks I’m currently using Cursor with Claude Opus 4.6 and it’s working wonderfully. However, I don’t see how this is going to work in the long term. It is like the first unicorns in Silicon Valley around 2010, when they were burning venture/investor cash as if the world would end the next week.

Anthropic just received an investment of US$35B at a US$380B post-money valuation. Here is what few people seem to notice: a valuation is not real money. It can shrink very quickly after just a few pieces of bad news. Everybody is watching the stocks of established SaaS companies fall because of the latest Claude releases. The same can happen to AI companies.

This is just an example; every other AI company is similar.

Bad Business Model

This is a continuation of the last topic.

First let’s get some context about today’s market.

This business depends on two main supplies: chips (processors and memory) and energy. Both are at all-time high prices.

First, chips. GPUs, TPUs, memory (RAM and SSDs), and custom chips. Every computer component currently has its price at a peak.

Let’s pick memory to analyze. Only three major companies manufacture memory chips. Micron announced that starting in 2026 it will stop selling memory to consumers (the Crucial brand) in order to focus on AI. Other manufacturers had already sold their entire 2026 production to major AI players by December 2025.

This produces strange situations in the market, such as RAM costing as much as, or even more than, a high-end GPU for a personal computer (128GB of DDR5 vs an RTX 5090 in this example).

Nonetheless, memory chips can be considered a commodity. They are used in many devices besides computers, such as cellphones, smartwatches, ATMs, cars, trucks, invoice machines, and so on. Higher memory prices could therefore raise the price of everything from food to personal computers. It is similar to what happens when oil prices increase, but on a smaller scale.

I don’t want to sound like a doomsayer, but when prices go up for everything, people start complaining a lot and governments need someone to blame.

The other supply is energy, which is in a similar situation to chips. Training and running AI models consumes a lot of energy, the same energy that everyone else needs. So as demand for energy increases, so does its price.

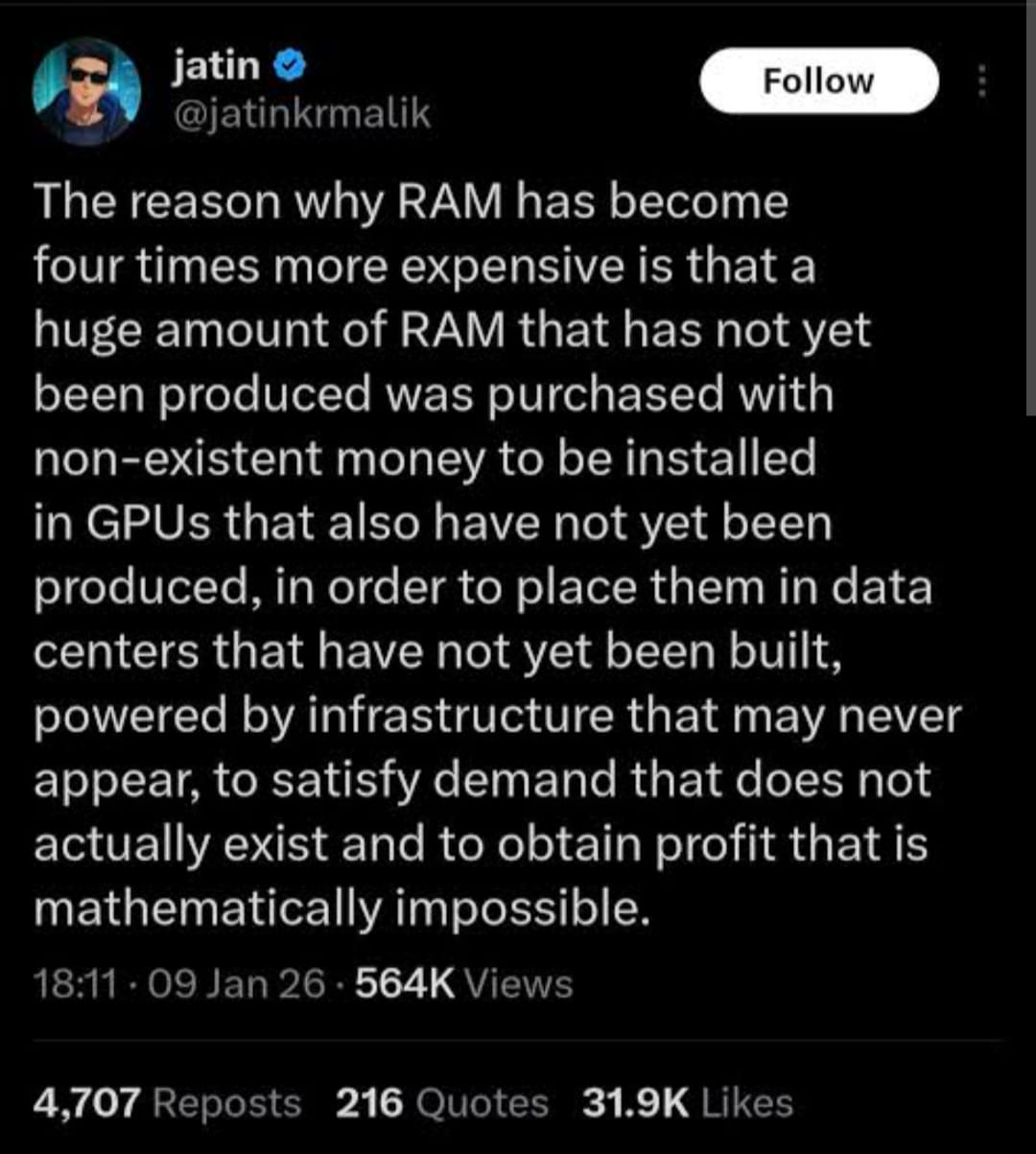

And here is a fun tweet that explains this point a little bit:

All of this makes the business model questionable.

Every AI company has to constantly launch and re-launch new models, optimize them, and deliver more value, even though they have never been profitable. At the same time, they continue buying expensive GPUs that lose half of their value in only two years.

So it’s like constantly building new datacenters because every two years a new GPU is released that makes training faster.

AI companies receive investors' money to buy GPUs that lose half their value every two years. How is this going to work? Also, every new chip tends to consume even more energy, so every two years the energy bill increases as well.
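To make the depreciation point concrete, here is a rough sketch in Python. It assumes the value halves smoothly every two years, as described above, and the purchase price of 100 is a made-up number, not real GPU pricing.

```python
def residual_value(purchase_price: float, years: float,
                   half_life_years: float = 2.0) -> float:
    """Value left after `years`, assuming it halves every `half_life_years`."""
    return purchase_price * 0.5 ** (years / half_life_years)


# Hypothetical spend of 100 (any currency unit), tracked over six years.
for year in (0, 2, 4, 6):
    print(year, residual_value(100.0, year))
```

Under these assumptions, only 12.5 out of every 100 spent is left after six years, and that is before counting the energy bill.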

Let’s assume the goal is to be the company that wins and captures most of the market share. This is the same playbook that Silicon Valley startups follow (use investor money to scale fast, capture market share, and become profitable later), except that here the expenses never stop.

Eventually the winner will raise prices to become profitable. What will happen then? In my case, here in Brazil, I’m not willing to pay more than US$20 per month to use AI for coding. If it becomes more expensive, it may stop making sense in many markets. Not every country has the same purchasing power as the USA or European countries.

Finally, I saw a LinkedIn post saying that what every code-focused AI company is doing is kind of a scam. You pay for tokens, the provider spends compute to process your request, the answer may or may not be what you asked for, and the provider is not responsible for the result. It is like a casino: you press the button, maybe you win, maybe you lose, and you keep paying the machine.

I don’t think it is an actual scam, but the comparison makes sense. AI companies sell tokens that may not work.

This is very different from traditional cloud services, where the service returns exactly what you requested with a very low error rate, much lower than the rate of AI hallucinations.

Someone might say it depends on the prompt you use, but this is still not what these companies advertise.

Consider this situation: you create some code and open a PR. Claude has an automation to review it for $15. It says there is an issue to fix. You run a different model and it says there is no issue. You close Claude’s comment.

You just lost $15 with no real progress.

There is no guarantee about what AI will return. If there is no value, you simply lost money and these companies are not responsible.
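One way to put a number on this: if only a fraction of the paid requests turn out to be useful, the real price of a useful result is higher than the sticker price. The sketch below uses the $15 review from the example above and a made-up 50% usefulness rate; I have no real data on that rate, and it also assumes each request is an independent attempt.

```python
def cost_per_useful_result(price_per_request: float, useful_rate: float) -> float:
    """Average spend needed to get one useful result."""
    if not 0 < useful_rate <= 1:
        raise ValueError("useful_rate must be in (0, 1]")
    # On average it takes 1 / useful_rate attempts to get one useful answer.
    return price_per_request / useful_rate


# $15 per review (from the example above); 0.5 is a hypothetical rate.
print(cost_per_useful_result(15.0, 0.5))  # 30.0
```

So with these assumed numbers, a "$15 review" really costs $30 per review that actually helps.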

Anyway, all this information is just to start a discussion about how to evolve this business model into something actually profitable and sustainable.

No Data to Train

We are seeing some very good open-source projects struggling because of AI.

The creator of Tailwind CSS laid off 75% of the company’s engineers because people are no longer paying for its commercial offerings and are just asking AI instead. The creator of curl shut down the project’s bug bounty program because he was receiving too many bogus AI-generated vulnerability reports.

These are examples of how AI is changing the open-source ecosystem.

So I was thinking: what if software licenses change somehow? If AI companies need to pay for the data they use for training, how will that work?

There are already cases in the USA where these companies lost lawsuits brought by book authors whose books were used without permission. Could this also apply to source code?

In my view, this is a low financial risk, but I wouldn’t be surprised if open-source projects start building “walled gardens”.

The biggest risk is that fewer new open-source projects will appear. If there is no way to make money building open source, then probably nothing will be open source. And if there is no open source, then all training data will belong to someone or some company that you either pay for or cannot access.

Individuality and Creativity

Following the previous topic, all the software we have today was built through human creativity. Of course there is bad code everywhere, but the entire software infrastructure we rely on today (databases, web servers, operating systems, compilers, and more) was built through individual and collective creativity over time, and that includes AI itself.

Nowadays it seems that everything is a Node or Python web app with React and microservices running on serverless clouds. This is not only because of the unicorn startups since 2010, but also because these languages are very well supported by LLMs.

Many people seem to have forgotten the old, reliable executable software that has been running for decades in businesses, firmware, and embedded systems.

To clarify, Claude Code and Codex work with C and C++, but the problems you encounter with these languages are very different from those in JavaScript and TypeScript. Also, the number of developers working in these so-called low-level languages is much smaller.

Certainly, good software engineers will continue building good software and there will always be space for creativity, but I have a feeling we are losing our touch.

We have several examples in computer history of what could be called genius software engineers who used creativity to overcome hardware limitations and run computationally expensive software.

For example:

John Carmack revolutionized 3D game engines, allowing games like Doom to run on very slow hardware in 1993, without AI or even Google.

Chris Sawyer built RollerCoaster Tycoon in 1999 almost entirely in x86 assembly. He did this because he wanted performance that other languages at the time could not provide, since the game simulates many individual guests, each with their own behavior.

Another example is FFmpeg, created around 2000. It became one of the most important tools for media processing and was written mostly in C and assembly by humans to extract the maximum performance possible.

These are examples, not the rule, but they illustrate that engineering is largely driven by creativity.

Finally, I think many developers are losing touch with the code itself. I see many new engineers who cannot develop anything without AI. I have also noticed this in myself when Cursor or Claude generates many changes and I sometimes lose track.

Even experienced engineers sometimes become a little lazy when reviewing AI changes in areas they don’t fully understand. They tend to accept whatever the AI produces.

Just to add one point: using AI for search and explanations is great. I use it a lot this way.

New Chip Designs

This is a point that may actually move in the opposite direction (good for AI).

Over the last five years we have seen companies designing new chip architectures beyond x86.

Apple released its fifth generation of Apple Silicon (M5). Qualcomm released the second generation of its X Elite CPUs.

Although they cannot compete with high-end x86 CPUs and GPUs in raw performance, they are extremely efficient in power consumption and this could be a game changer.

Other companies are also trying to design more energy-efficient chips. Intel, for example, is working on new high-bandwidth memory designs that consume less power.

However, designing new chip architectures is expensive (billions of dollars) and takes many years to mature. But it is definitely something that could help AI grow and possibly become profitable.

Privacy

Right now everybody uses Cursor, Copilot, Claude, Gemini, and ChatGPT in the cloud.

Today most people accept this because cloud models perform so well, but how can we guarantee that these companies are not collecting source code from many private projects?

This is a valid concern. Not every company wants to share its proprietary code with third parties that have no responsibility for it.

Local LLMs might be the solution, but with current high hardware prices this may be expensive for many people and companies.

TL;DR – Conclusion

It is possible that this business model will collapse at some point, so we need to wait and see how things evolve.

Many people say an “AI winter” is coming soon, and maybe it is. But I don’t think LLMs will disappear.

In summary:

- AI in coding is here to stay.

- The business model is not mature yet and will change significantly in the coming months (or even weeks).

- Most AI companies will probably fail.

- There is a lack of knowledge transfer to new engineers, and this needs to be addressed as soon as possible.

My guess is that AI will become the new search engine standard, and in the near future we will use local LLMs for coding.

AI has been around for decades in different areas (banks, marketplaces, chatbots…), but for the general public it has now arrived for good.